Learning Avoidance

However, while users might get a mathematically real ROI from learning more about user interfaces, that ROI is much less clear from a behavioral standpoint. The problem is that the investment happens immediately: users must suffer the interaction cost of navigating through obscure parts of the user interface. The benefit, in contrast, is deferred: users realize it only in small increments, at undefined moments in the future when they might use the newly discovered features.

This is a type of behavior I see constantly.[1] Rather than doing extra upfront work that will provide benefits in the long run, people will continually pick the choice that is easier in the short run.

For example, when they encounter a bug in an application, users typically find a workaround and then keep using that workaround, rather than submitting a bug report that might lead to a better long-term outcome.

Another example: whenever possible, people will use applications or app features they're familiar with, rather than learning new applications or features, even if what they already know is only barely suitable for the task at hand. A particularly extreme example I've encountered recently was a user who did spreadsheet-like calculations in Word. The user would enter the numbers into Word as tab-delimited columns, and then do the calculations with a calculator app on an iPhone.

Rather than learning how to use an application like Excel, it was easier to combine two familiar applications: Word and an iPhone calculator app.

As a result of this behavior, many users are perennial beginners. They reach a basic skill level that allows them to get the job done, but then stop learning.

Nielsen continues:

People don't read manuals. People don't go exploring all over the user interface in search of neat features. People don't investigate whether there's a better way of doing something once they’ve learned an approach that works. (Maybe you do these things, but you’re not an average user.)

Learning is hard work, and users don't want to do it.

To remedy this, Nielsen suggests a number of things UI designers can do. I particularly like these three:

Just-in-time learning

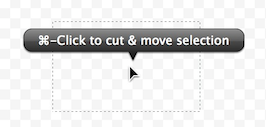

Since users don't read manuals, you should design the UI so that it is self-documenting. Sometimes, this means using clear, plain, self-explanatory language in your user interface. Other times, it can go as far as including documentation right in the application itself, where and when people actually need it, rather than in a separate manual.

I particularly like how Acorn automatically shows contextual help popups while you use it.[2]

Exploit teachable moments

When things go wrong, don't just point out that something went wrong. Explain why it went wrong, and what the user can do to fix the problem.

Appway, a process management application I work on, constantly validates processes while the user is working on them. An unobtrusive widget tells the user how well she is currently doing, a bit like playing a game. The more potential problems there are in a process, the more dire the widget's icon becomes. On request, Appway then suggests actions to fix these problems.

Forgiveness

If users trust that mistakes won't be punished, they're more likely to explore your application, and thus more likely to learn how to use it better. This doesn't just mean allowing undo. BBEdit, for example, can keep a history of the text files it edits, so that you can go back to earlier versions even after the app has been restarted.

You can find more strategies in Nielsen's article.

[1] Both in other people and in myself.

[2] Cleverly, Acorn uses the same popups to prevent mode errors. Since the popups communicate what tool you're currently using, you're never confused about the mode the application is in. I've written about this in my book.
