Measuring Design (or: You're not Google)

There has been a lot of brouhaha about Google's design process. It all started when Doug Bowman left Google. He wrote:

When a company is filled with engineers, it turns to engineering to solve problems. Reduce each decision to a simple logic problem. Remove all subjectivity and just look at the data. Data in your favor? Ok, launch it. Data shows negative effects? Back to the drawing board. And that data eventually becomes a crutch for every decision, paralyzing the company and preventing it from making any daring design decisions.

This led to a number of blog posts complaining about Google's process, from the strangely confusing to the thoughtful and interesting. Scott Stevenson's post sums up what many have written:

Visual design is often the polar opposite of engineering: trading hard edges for subjective decisions based on gut feelings and personal experiences. It's messy, unpredictable, and notoriously hard to measure. The apparently erratic behavior of artists drives engineers bananas. Their decisions seem arbitrary and risk everything with no guaranteed benefit.

There are three problems with the criticism of Google: first, you're talking about different things; second, it's a false dichotomy; and third, you're not Google anyway.

You're talking about different things

I just read the New York Times article on Douglas Bowman's departure from Google, which made the problem painfully obvious in its first two sentences:

Can a company blunt its innovation edge if it listens to its customers too closely? Can its products become dull if they are tailored to match exactly what users say they want?

Bowman himself also seems to talk about user feedback when he is quoted:

"Using data is fundamental to what we do," Mr. Bowman said. "But we take all that with a grain of salt. Anytime you make design changes, the most vocal people are the ones who dislike what you've done. We don't just throw the numbers in a spreadsheet."

But that's not the kind of data Google is talking about. The Times article actually explains what Google does:

Before he could decide whether a line on a Web page should be three, four or five pixels wide, for example, he had to put up test versions of all three pages on the Web. Different groups of users would see different versions, and their clicking behavior, or the amount of time they spent on a page, would help pick a winner.

The Times conflates "popular design" and "successful design." Bowman talks about vocal users who complain about design changes. Google talks about measuring actual user success rates. It's obvious why the two parties can't agree on how to use data when designing; they're talking about fundamentally different kinds of data.

This misrepresentation of what Google actually does is reflected in a lot of blog posts on the subject. For example, Scott Stevenson writes:

An experienced designer knows that humans do not operate solely on reason and logic. They're heavily influenced by emotions and perceptions. Even more frustratingly, they often lie to you about their reactions because they don't want to be seen as imperfect.

This is absolutely true, but it assumes that Google actually asks its users what they think. I don't know how exactly Google measures these things, but I would assume that in the popular example of the 41 different shades of blue, Google didn't ask its users what shade of blue they liked best; instead, they created 41 different versions of the site and measured which one led to the highest rate of user success (i.e. the percentage of users actually reaching their intended goal and the time it took on average to reach that goal using the given version of the site).
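To make the idea concrete, here is a minimal sketch of how such a test could be wired up. It is not a description of what Google actually does, which I don't know. The function names, the hash-based bucketing and the `(user_id, reached_goal)` session format are all assumptions made for illustration.

```python
import hashlib


def assign_variant(user_id: str, num_variants: int) -> int:
    """Map a user to one of num_variants design versions.

    Hashing the (hypothetical) user id keeps the assignment stable,
    so the same user sees the same version on every visit.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % num_variants


def success_rate_by_variant(sessions, num_variants):
    """Compute the share of sessions that reached their goal, per version.

    sessions: iterable of (user_id, reached_goal) pairs (assumed format).
    Returns a list with one rate per version, or None where a version
    received no sessions at all.
    """
    totals = [0] * num_variants
    successes = [0] * num_variants
    for user_id, reached_goal in sessions:
        variant = assign_variant(user_id, num_variants)
        totals[variant] += 1
        successes[variant] += int(reached_goal)
    return [s / t if t else None for s, t in zip(successes, totals)]
```

Nobody is asked for an opinion here: the only inputs are which version a user saw and whether that user reached the goal.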

User feedback is useful, but it's not trustworthy data. The data Google talks about is not user feedback, it's hard data from actual usability tests. You can never trust what users say, you can only trust what they do. Nielsen says:

To design an easy-to-use interface, pay attention to what people do, not what they say.

So before we even start talking about how data and design interact, we have to clearly define what data we're talking about. It's not an angry user sending you an e-mail telling you that he doesn't like the shade of blue you're using. We're talking about things like how long on average it takes your users to reach a given goal using your design, or how many users give up before they reach their goal.

How many users manage to find your store's location on your website? How many users abandon their shopping cart because they can't figure out how to calculate shipping cost? This is useful data, and it can help you improve your design.
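As a rough sketch, these numbers can be derived from very simple records. The `(reached_goal, seconds)` format below is a hypothetical one, standing in for whatever your analytics or test notes actually capture.

```python
from statistics import mean


def task_metrics(sessions):
    """Summarise observed sessions for a single task.

    sessions: list of (reached_goal, seconds) pairs (assumed format),
    where seconds is how long the user spent before succeeding or
    giving up.
    """
    if not sessions:
        raise ValueError("need at least one session")
    # Only successful sessions count towards the time-to-goal average.
    completed = [seconds for reached_goal, seconds in sessions if reached_goal]
    completion_rate = len(completed) / len(sessions)
    return {
        "completion_rate": completion_rate,
        "abandonment_rate": 1 - completion_rate,
        "mean_seconds_to_goal": mean(completed) if completed else None,
    }
```

For example, `task_metrics([(True, 30.0), (True, 50.0), (False, 120.0), (False, 10.0)])` reports that half of the users reached the goal, taking 40 seconds on average.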

It's a false dichotomy

The people who criticise Google seem to assume that you can either give designers absolute creative freedom, or you can dictate design using numbers, killing all creative freedom. That's not how it works. Creativity thrives in restrictions. In fact, it's the very job of a designer to come up with the best possible solution for any given design problem within the given restrictions. And the restrictions typically include those pesky users who never quite behave the way you want them to.

Interaction designers don't design in a vacuum or for an art gallery. Their designs are used by thousands or even millions of people. These people have to be able to figure out how to use the designs. And since humans are often unpredictable, this means that designs have to be tested. Darren Geraghty had this apt unattributed quote on his Twitter stream:

Design is the art of gradually applying constraints until only one solution remains

Users are one of the most important constraints designers have to take into account.

In the Times article, Bowman says:

Data eventually becomes a crutch for every decision, paralyzing the company and preventing it from making any daring design decisions.

I really don't know what's happening inside of Google, but the way I read it, Bowman basically says "measuring whether users can successfully use a design makes it impossible to create a truly great design." Perhaps when measuring is overdone, that is the case. By testing every single changed pixel, changes become virtually impossible. But that doesn't mean that you should avoid testing: whether users can actually successfully use a design is its most important attribute. If you don't measure whether your design works, it's very likely not a great design. And it doesn't mean that you can't have daring designs: Whether a design is daring is a completely orthogonal question to its usability; both daring designs and boring designs can be good designs if users can successfully use them.

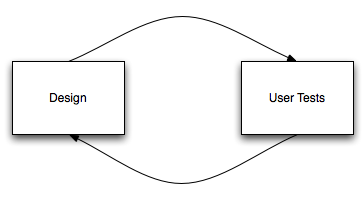

User test data is not a crutch, it's valuable feedback telling you whether you're going in the right direction. Designing and testing are not opposites, they are interacting agents.

First, you design. This is the creative part. Then, you do usability tests; you measure your design. What did and did not work? That's the data. This data does not come at the expense of human experience, it is the human experience. The human experience is a design restriction, just like the browser is a design restriction for web design, and paper is a design restriction for print design. Just like your design has to exist within the restrictions of a web browser, it has to exist within the restrictions of actual humans using it. Now that you have the data of what works and what doesn't, you go back to designing, to the creative part, to the task of creating the best design you possibly can for that particular problem.

If either the designer or the data is missing, the result will not be great. [1]

Design is not held back by data, design is refined and perfected by data.

You're not Google

Hundreds of millions of people visit Google each month. If one shade of blue leads to 0.001% fewer user errors, that may result in 2000 people who don't experience that particular problem each month!

In your case, a 0.001% difference in user success rate doesn't matter. In Google's case, it absolutely does. In your case, each user taking a second longer on average to reach his goal doesn't matter. In Google's case, that's roughly 50,000 wasted hours each month!
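The arithmetic behind these figures is easy to check. The sketch below assumes 200 million visits per month for Google, which is the number implied by the 2000 people mentioned above, and an invented 20,000 visits per month for a small site. Both visitor counts are assumptions.

```python
def users_affected(monthly_visits: int, rate_delta_percent: float) -> float:
    """Number of visits per month touched by a change in error rate."""
    return monthly_visits * rate_delta_percent / 100


def hours_lost(monthly_visits: int, extra_seconds_per_visit: float) -> float:
    """Total hours per month spent on the extra seconds per visit."""
    return monthly_visits * extra_seconds_per_visit / 3600


GOOGLE_VISITS = 200_000_000   # assumption, implied by the 2000-person figure
SMALL_SITE_VISITS = 20_000    # hypothetical small site

print(round(users_affected(GOOGLE_VISITS, 0.001)))       # 2000 people
print(round(hours_lost(GOOGLE_VISITS, 1)))               # about 55,556 hours
print(round(users_affected(SMALL_SITE_VISITS, 0.001), 2))  # 0.2 people
print(round(hours_lost(SMALL_SITE_VISITS, 1), 1))        # about 5.6 hours
```

At the small site's scale, the same change affects a fraction of one person per month, which is why chasing it is not worth your time.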

Apple is another example of this. They come up with hundreds of prototypes for every new product, because every single issue the final device has - however unlikely and small - will occur many times if you have millions of users. The same applies to Microsoft, and Adobe, and Mozilla. But it probably doesn't apply to you.

Going back to Bowman's quote, he wrote:

Data in your favor? Ok, launch it. Data shows negative effects? Back to the drawing board.

To understand why Google operates like this, you have to understand the scale of what they do. "Data showing negative effects" may mean that, using the new design, tens of thousands of users may suddenly not be able to reach their goals.

This probably doesn't apply to you, so you don't have to do what Google does.

What you should do

The fact that Apple and Google and other huge companies measure their design in this way does not mean that you should do the same. Instead of measuring a dozen different versions of your design with hundreds of users, create one, two or at most three versions, test them with few users (possibly using a simple usability testing app like Silverback) and iterate. Don't worry about what Google is doing.

Two last points

I'd like to go back to Stevenson's quote from the very beginning of this article, where he wrote:

Visual design is often the polar opposite of engineering: trading hard edges for subjective decisions based on gut feelings and personal experiences. It's messy, unpredictable, and notoriously hard to measure. The apparently erratic behavior of artists drives engineers bananas. Their decisions seem arbitrary and risk everything with no guaranteed benefit.

I feel that I have to add two last points here.

- Software Engineering and Visual Design are closer to each other than Stevenson implies. Visual Designers go through a creative process in which they create an artefact that is executed by users. Software Engineers go through a creative process in which they create an artefact that is executed by processors. Both have a creative part (where you come up with clever solutions to given problems) and a testing and measuring part (where you figure out whether your solutions actually solve the given problems and go back to part 1 if they don't).

- User interaction is not hard to test. It's very easy to find a few people, sit them in front of your solution, and see whether they're actually capable of figuring out how to use it. Sure, the apparently erratic behavior of users drives artists bananas, but that doesn't mean it's not valid feedback. It's also not particularly difficult to get hard data on design. What do users click on? At what stage do they abandon their shopping carts? What terms do they enter into the search box, and do they get any meaningful results for those terms? These things can be measured easily and cheaply on any running system.

And one final paragraph

Note that I am neither defending nor attacking Google or Bowman. Is what Google does overkill? I don't know. Does their process kill the very possibility of ever having great design? Possibly. Do they really need to test the difference between a 2-Pixel-Border and a 3-Pixel-Border? Probably not. But I've never done a project anywhere near Google's scale. I simply don't know whether what Google does is necessary or insane. Are designers right to complain about what Google does? Perhaps they are, but that doesn't mean that testing and measuring your design is unnecessary. Measuring design is not the antithesis of design, it's a tool that every designer should have in his or her tool chest.

Further Reading

Mike West has written a fantastic followup to this post, expanding on some of my points and calling me out on some others, especially on not always clearly differentiating between A/B testing (which gives purely objective results and can only really be used to test small differences between designs) and usability testing (which produces both objective and subjective feedback and can be used to test big changes). Three choice quotes:

In general, engineers understand and can relate well to automated A/B testing, and designers understand and can relate well to more personal usability testing. The two are, however, not the same, don't provide the same data, and ought not be conflated.

(...)

An insistence on A/B measurement of each change, no matter how small, means that everything you measure will be "trivial" in the way that the most glaring examples of "Googlism" have been: shades of colour, or widths of border. Only trivial changes are testable changes, everything else is fraught with layers of uncertainty

(...)

You aren't Google. Even if they're dangerously-reliant on A/B testing, you almost certainly aren't A/B testing enough. I know I'm not.

And now, go and read Mike's essay.

-

[1] Google's design problem is not that they measure, Google's design problem is that the designers are missing; they only have one part of the equation, which makes the fact that Bowman left doubly problematic.

If you require a short url to link to this article, please use http://ignco.de/94