Did you use ChatGPT?

It's common to downplay the impact that systems like ChatGPT will have by pointing out that, at most tasks, they aren't anywhere near as good as humans. What we're starting to find out is that they don't need to be. Most people are unable to tell the difference between a skilled human performing a task well and an automated system performing it adequately.

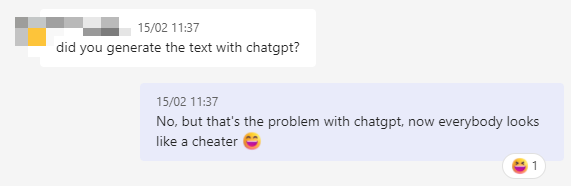

This means that people are already starting to accuse skilled humans of using ChatGPT, or similar systems. This Reddit thread seems to be an example, but of course, I'm not a poet, so I can't say for sure.

In the short term, tools like ChatGPT create distrust in skilled human work and devalue it, even if, in reality, they can't match the quality of work that humans produce.

If people will accuse skilled humans of using ChatGPT anyway, why use skilled humans in the first place? Adequate is good enough.

If you require a short URL to link to this article, please use http://ignco.de/790